Migrating to a cloud-native architecture is one of the most powerful ways to improve business agility. The modern cloud delivers virtually unlimited, on-demand compute power, enabling platforms to scale instantly to meet demand. It’s no surprise that 94% of companies worldwide already use cloud computing in some capacity, and 97% of IT leaders plan to expand their cloud systems in the next few years (source).

Yet many enterprises remain constrained by legacy, monolithic applications. These systems hold critical business logic but act as bottlenecks to digital transformation. Insurance applications, banking platforms, and other unique software systems have been built over the course of decades in languages such as PowerBuilder, EGL, and Smalltalk. These types of systems require a flexible, customizable, scalable, and agile modernization process that can be easily jump-started to deliver incremental results.

But how can you untangle a complex monolith without disrupting stable functionality and critical business operations? After all, carving out pieces of a monolithic system is a manual, labor-intensive, and time-consuming process. To move forward in today’s climate, organizations need a controlled, automated approach that ensures critical functions can be safely modernized, tested, and deployed in a timely manner.

Architectural breakthrough: microservices + micro-frontends

The optimal solution lies in a more modern architecture built on microservices and micro-frontends. Microservices are a web of independent, modular components that can be scaled, updated, and reused individually. Micro-frontends are user-facing components that can operate either independently or as a cohesive whole.

Modernizing the front end is just as important as modernizing the business logic itself. Forrester Research finds that companies investing in UI/UX design see a $100 return for every $1 spent. Outdated interfaces remain one of the most immediate barriers for legacy applications, and micro-frontends directly address this need.

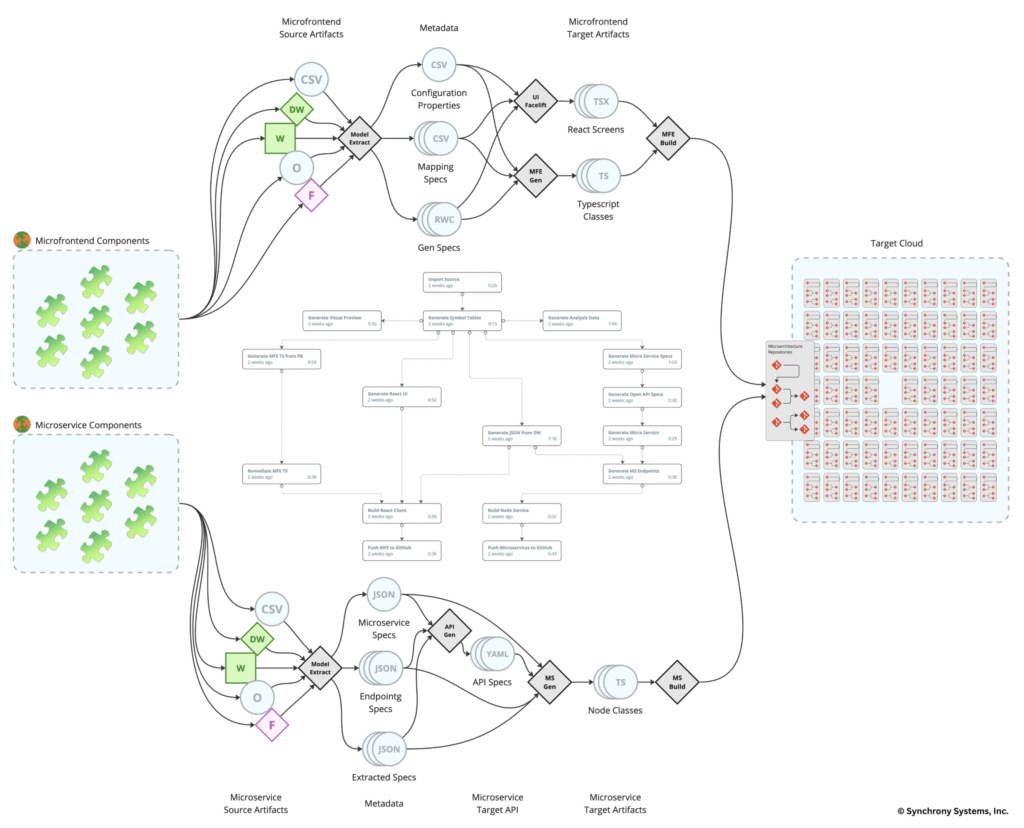

Synchrony Systems’ Modernization Lifecycle Platform (MLP) comes equipped with end-to-end automation for extracting “subsets” of business logic and user interface, and transforming them into reusable components for microservices and micro-frontends. This enables organizations to modernize their monolithic legacy applications into a hyperscale cloud architecture. By focusing on the high-value business functionality first, Synchrony helps accelerate modernization timelines so enterprises can deploy and test migrated functions and complete features continuously in months instead of years.

The illustration below shows how MLP orchestrates a modernization solution from a PowerBuilder monolithic architecture to a target microarchitecture with a TypeScript/React frontend and a TypeScript/Node.js backend as the target programming languages.

How monoliths become microservices and micro-frontends

Rather than migrating monolithic legacy codebases wholesale or “as-is,” Synchrony offers a technology-assisted reengineering process and workflow that is iterative, incremental, and analysis-driven.

Analytics and application rationalization

First, an exhaustive analytical inspection of your legacy application is performed using modernization technology purpose-built to identify and extract high-value business scenarios and their execution paths. This analysis produces targeted, self-contained components (a.k.a. “subsets”) for every identified business scenario embedded inside each monolithic codebase. This extraction acts as the foundation for exposing the hidden application “component vocabulary” in terms of application layers, subsystems, and business functions. Powered by the newly exposed knowledge of your system, the extracted component architecture becomes the stepping stone that drives continuous and incremental modernization toward the target cloud component architecture.
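
The idea of extracting a self-contained subset along a scenario's execution paths can be illustrated with a small reachability sketch. This is not MLP's actual analysis, which the article does not detail; the `CallGraph` shape, the function names, and the PowerBuilder-style unit names are all hypothetical and chosen only for illustration.

```typescript
// Hypothetical call graph: each legacy unit (window, function, DataWindow)
// maps to the units it invokes. All names are illustrative assumptions.
type CallGraph = Record<string, string[]>;

// Collect every unit reachable from a scenario's entry point (depth-first).
function extractSubset(graph: CallGraph, entryPoint: string): Set<string> {
  const visited = new Set<string>();
  const stack: string[] = [entryPoint];
  while (stack.length > 0) {
    const unit = stack.pop() as string;
    if (visited.has(unit)) continue;
    visited.add(unit);
    for (const callee of graph[unit] || []) {
      if (!visited.has(callee)) stack.push(callee);
    }
  }
  return visited;
}

// Units that no business scenario reaches are candidates for dead-code removal.
function findDeadCode(graph: CallGraph, entryPoints: string[]): string[] {
  const live = new Set<string>();
  for (const entry of entryPoints) {
    extractSubset(graph, entry).forEach((unit) => live.add(unit));
  }
  return Object.keys(graph).filter((unit) => !live.has(unit)).sort();
}

const legacyGraph: CallGraph = {
  w_policy_entry: ["f_validate_policy", "dw_policy"],
  w_claim_entry: ["f_validate_claim", "dw_claim"],
  f_validate_policy: ["f_lookup_rates"],
  f_validate_claim: [],
  f_lookup_rates: [],
  f_old_report: ["dw_policy"],
  dw_policy: [],
  dw_claim: [],
};

const policySubset = extractSubset(legacyGraph, "w_policy_entry");
const deadUnits = findDeadCode(legacyGraph, ["w_policy_entry", "w_claim_entry"]);
console.log(Array.from(policySubset).sort(), deadUnits);
```

In this toy graph, the policy scenario pulls in only the four units it actually touches, and `f_old_report` is flagged because no scenario reaches it. A real analysis would also have to account for dynamic calls, events, and data dependencies.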

Target architecture metadata harness

The new application component architecture is the first representation and visualization of the formerly monolithic application’s structural decomposition into a granular micro-frontend/microservice layer. The component model is used for generating the required metadata that drives the execution of every modernization service agent, such as: a) data access generation; b) business objects generation; and c) UI facelift generation into a reactive frontend. This is where client-specific requirements are also incorporated to align with IT’s model target architecture.
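
One way to picture metadata driving generation agents is a component model in which each entry names the agents that should run for it. The sketch below is an assumption-laden illustration: the `ComponentMetadata` shape, the agent names, and the output paths are hypothetical and do not reflect the actual MLP harness format.

```typescript
// Hypothetical agent kinds, mirroring the three examples named in the text.
type AgentKind = "dataAccess" | "businessObject" | "uiFacelift";

// Hypothetical metadata record for one target component.
interface ComponentMetadata {
  name: string;
  kind: "microservice" | "micro-frontend";
  sourceUnits: string[]; // legacy artifacts the component is derived from
  agents: AgentKind[];   // generation agents to run for this component
}

// Each agent here only reports the file it would generate (paths are assumptions).
type Agent = (component: ComponentMetadata) => string;

const agents: Record<AgentKind, Agent> = {
  dataAccess: (c) => `${c.name}/src/dataAccess.ts`,
  businessObject: (c) => `${c.name}/src/model.ts`,
  uiFacelift: (c) => `${c.name}/src/View.tsx`,
};

// Walk the component model and let the metadata decide which agents run.
function planGeneration(components: ComponentMetadata[]): string[] {
  const outputs: string[] = [];
  for (const component of components) {
    for (const kind of component.agents) {
      outputs.push(agents[kind](component));
    }
  }
  return outputs;
}

const harness: ComponentMetadata[] = [
  {
    name: "policy-service",
    kind: "microservice",
    sourceUnits: ["dw_policy", "f_validate_policy"],
    agents: ["dataAccess", "businessObject"],
  },
  {
    name: "policy-entry-ui",
    kind: "micro-frontend",
    sourceUnits: ["w_policy_entry"],
    agents: ["uiFacelift"],
  },
];

const generationPlan = planGeneration(harness);
console.log(generationPlan);
```

Keeping the agent selection in data rather than in code is what would let client-specific requirements change the output without touching the agents themselves.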

Fine-tuning the transformation engine

The breakdown of subsets further undergoes an iterative process that identifies micro-frontends, microservices, and common layers used by both. An interactive process identifies UI/UX requirements and service layer requirements (typically slated for data access) to produce highly granular, reusable, and scalable components on the target. The process results in custom-tailored knowledgebases (KBs) of code refactoring, transformation, and generation rules for target business services, along with facelift rules for a modern, reactive target web frontend.

Orchestrating modernization workflows

The analytics, metadata harness, and fine-tuned transformation knowledgebase are all assembled into custom-tailored workflows. When executed end-to-end, they produce the desired target architecture, consisting of hundreds and often thousands of structural (repositories) and microarchitectural (microservices and micro-frontends) components. Workflows can be invoked on demand by users or triggered by specific events, such as commits to newly generated code or updates to the metadata harness or KB. The holistic integration of code, tools, and processes ensures that the modernization project runs efficiently and at scale.
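
The two invocation modes described above, on demand and event-triggered, can be sketched with a small registry. This is a minimal illustration rather than MLP's orchestration API; the `WorkflowRegistry` class, the event names, and the workflow names are hypothetical.

```typescript
// Hypothetical event names modeled on the triggers the text mentions.
type TriggerEvent = "codeCommit" | "metadataUpdate" | "kbUpdate";

interface Workflow {
  name: string;
  triggers: TriggerEvent[];
  run: () => string; // returns a status message in this sketch
}

class WorkflowRegistry {
  private readonly workflows: Workflow[] = [];

  register(workflow: Workflow): void {
    this.workflows.push(workflow);
  }

  // On-demand invocation: a user runs one workflow by name.
  invoke(name: string): string {
    const workflow = this.workflows.find((w) => w.name === name);
    if (!workflow) throw new Error(`Unknown workflow: ${name}`);
    return workflow.run();
  }

  // Event-driven invocation: run every workflow subscribed to the event.
  dispatch(event: TriggerEvent): string[] {
    return this.workflows
      .filter((w) => w.triggers.indexOf(event) !== -1)
      .map((w) => w.run());
  }
}

const registry = new WorkflowRegistry();
registry.register({
  name: "regenerate-services",
  triggers: ["metadataUpdate", "kbUpdate"],
  run: () => "services regenerated",
});
registry.register({
  name: "build-and-test",
  triggers: ["codeCommit"],
  run: () => "build verified",
});

const onDemand = registry.invoke("build-and-test");
const onKbUpdate = registry.dispatch("kbUpdate");
console.log(onDemand, onKbUpdate);
```

Here a KB update reruns only the workflow that depends on the rules, while a user can still start any workflow explicitly.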

Continuous modernization

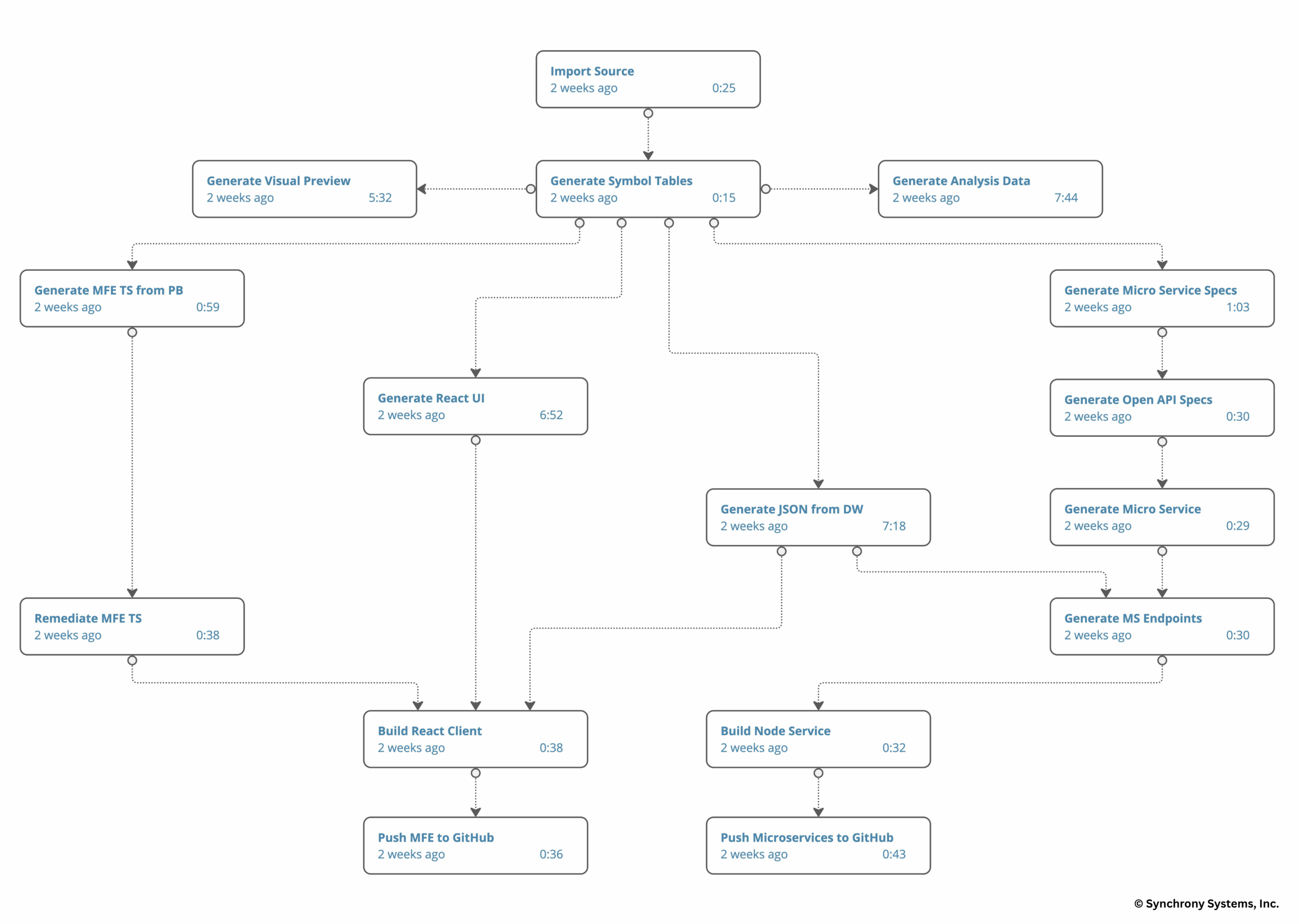

All units of execution inside a workflow, known as “autoflows,” can be thought of as pipelines of pipelines that enable continuous execution of the entire modernization lifecycle. The original monolithic application architecture is incrementally decomposed into independent, atomic, and stateless microservices and self-contained, reusable micro-frontends, ready for deployment into IT-specific cloud environments. The result is a transformed application with cloud-native architecture and modern UI/UX, preserving the original business logic and retaining 100% functional equivalence.
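
The "pipelines of pipelines" idea can be sketched as function composition in which a pipeline is itself a step, so pipelines nest inside other pipelines. The step names and the `ModernizationState` type below are hypothetical; the article does not describe how autoflows are actually implemented.

```typescript
// A step transforms a value of type T into a new value of the same type.
type Step<T> = (input: T) => T;

// A pipeline runs its steps in order. Because a pipeline is itself a Step,
// pipelines can be composed into larger pipelines.
function pipeline<T>(...steps: Step<T>[]): Step<T> {
  return (input: T) => steps.reduce<T>((acc, step) => step(acc), input);
}

// Hypothetical state: just a log of completed stages.
interface ModernizationState {
  completed: string[];
}

// Helper that builds a step recording its label (no real work is done here).
const record = (label: string): Step<ModernizationState> =>
  (state) => ({ completed: [...state.completed, label] });

// Inner pipelines (stage names are illustrative assumptions).
const analyze = pipeline(record("inspect"), record("extract-subset"));
const transform = pipeline(record("generate-services"), record("generate-frontends"));

// Outer pipeline composed of pipelines plus a final step.
const autoflow = pipeline(analyze, transform, record("publish"));

const finalState = autoflow({ completed: [] });
console.log(finalState.completed.join(" -> "));
```

Because each stage returns a new state rather than mutating shared data, any inner pipeline can be rerun on its own when only part of the lifecycle needs to execute again.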

The illustration below shows an end-to-end customized MLP modernization workflow for monolithic client/server desktop applications.

Why modernizing to microservices and micro-frontends improves speed and agility

- Customers can continuously extract high-value functionality and features from their legacy applications at their own pace and timeframe, until everything is modernized.

- Removing dead code and breaking the monolithic functionality into a web of independent microarchitecture components reduces technical debt and fosters technological agility.

- Legacy applications are fully transformed into modern target architectures, unlike like-for-like, “as-is” transformations that preserve the legacy architectural semantics and make it hard to stay agile and scale on modern target platforms.

- Microservices and micro-frontends are delivered incrementally as early milestones for customers to test and deploy into production piecemeal, rather than waiting for the entire application to be modernized.

- Built for flexibility and adaptation, Synchrony’s modernization platform conforms to the customer’s specific target architecture, tooling, and requirements (e.g., RESTful, Kubernetes, cloud infrastructure, API gateway, widget libraries, data access harnesses, etc.) rather than dictating a standardized solution.

Real-world transformation: from monoliths to microarchitectures

Modernizing monolithic application architectures into microarchitectures enables companies to untangle decades of core domain functionality and extract it into highly reusable components. Synchrony has helped dozens of teams extract and transform critical domain functionality and UI from their legacy client/server or host/mainframe monolithic architectures into reusable target microarchitectures.

PowerBuilder

A PowerBuilder client/server application subset (after removing dead code and selecting the initial set of high-value components) with a traditional monolithic architecture:

- Windows → 323

- Data Windows → 1,908

- Lines of Code (LOC) → 416K

Yields the following cloud microarchitecture:

- Micro-frontends → 47

- Microservices → 488

- Repositories → 1,650

Would you like to learn how this could be applied to your in-house legacy applications?

Contact us to meet with our senior modernization specialists for an in-depth conversation and consultation about the target architecture options your in-house portfolio of legacy applications can take.

Microarchitecture in legacy application modernization FAQ

This FAQ addresses common questions about our technology, drawing from our expertise in modernization. Whether you’re a developer, architect, or CTO, these insights can help you understand how to revitalize your tech stack without disrupting operations.

What is microservices extraction in legacy application modernization?

Synchrony Systems’ Microservices Extraction technology is an automated tool that untangles complex legacy monoliths and converts them into a network of reusable microservices and micro-frontends. It focuses on extracting subsets of business logic and user interfaces from applications written in languages such as PowerBuilder, EGL, and Smalltalk. This process creates independent, modular components that can be scaled, updated, and deployed individually in a cloud-native environment, using target stacks such as TypeScript/React for frontends and TypeScript/Node.js for backends.

Why should enterprises modernize legacy applications into microarchitectures?

Legacy applications are, by definition, outdated. They are built on aging infrastructure and rely on a talent pool nearing retirement to keep running. These systems inherently limit scalability, agility, and innovation. By modernizing to microservices and micro-frontends, organizations can leverage the cloud’s on-demand compute power, enabling instant scaling to meet demand. This shift eliminates technical debt, fosters technological agility, and allows for incremental improvements without a full overhaul.

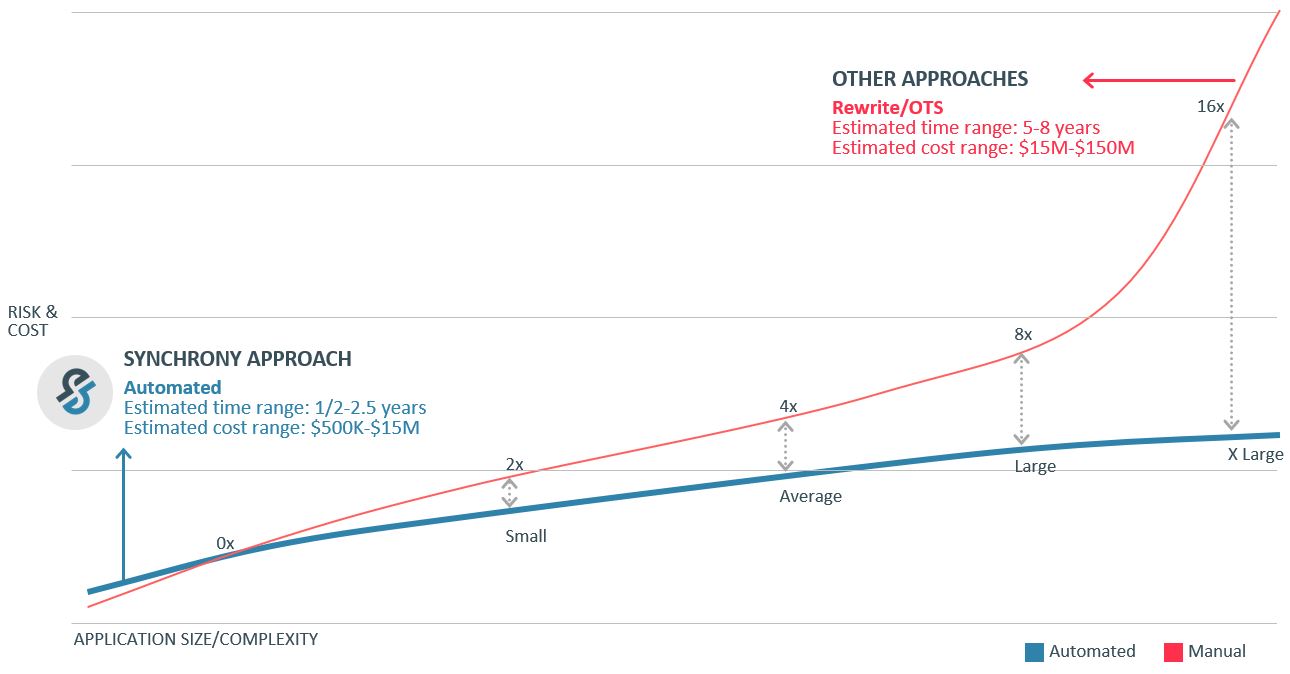

How does Synchrony Systems’ platform differ from traditional modernization approaches?

Traditional methods often involve manual, labor-intensive processes or “as-is” migrations that preserve outdated semantics, making it hard to achieve true agility. Synchrony’s platform uses end-to-end automation for an iterative, incremental, analysis-driven reengineering process. It avoids wholesale migrations by focusing on high-value business scenarios first, delivering functional equivalents in months rather than years. The result is a hyperscale cloud architecture tailored to your specific requirements, including RESTful services, Kubernetes, and custom API gateways.

What are microservices and micro-frontends, and why are they important?

Microservices are independent, modular backend components that handle specific business functions, allowing them to be scaled, updated, or reused without affecting the entire system. Micro-frontends are user-facing UI components that can operate standalone or integrate seamlessly. Together, they create a flexible “web” of components that improve speed, reusability, and overall application performance.

What is Synchrony’s step-by-step process for microservices extraction?

The process is built into the Modernization Lifecycle Platform (MLP) and includes several key phases:

- Analytics and application rationalization: An exhaustive analysis identifies high-value business scenarios and extracts self-contained components, revealing the application’s hidden “component vocabulary” across layers, subsystems, and functions.

- Target architecture metadata harness: This generates metadata to drive modernization agents, incorporating client-specific requirements for data access, business objects, and reactive UI generation.

- Fine-tuning the transformation engine: Subsets are broken down into granular micro-frontends and microservices. An interactive process refines UI/UX and service layer needs, creating custom refactoring rules and knowledgebases.

- Orchestrating modernization workflows: Analytics, metadata, and transformation rules are assembled into custom workflows that produce structural repositories and microarchitectural components. These can be triggered on demand or by events like code commits.

- Continuous modernization: Workflows run as “autoflows” (pipelines of pipelines), incrementally decomposing the monolith into atomic, stateless components ready for cloud deployment. The final output is a fully transformed application that preserves business logic and is 100% functionally equivalent.

If the modernization process is automated, how does it ensure safety?

Synchrony’s MLP emphasizes controlled automation to avoid disrupting stable functionality. It uses purpose-built technology for inspection, extraction, and transformation, ensuring critical functions are modernized, tested, and deployed safely and accurately. Automation handles the heavy lifting, but the process includes iterative fine-tuning and interactive elements to incorporate your team’s input throughout the modernization process, minimizing risks and maintaining operational continuity.

Can modernization be done incrementally without a big-bang approach?

Absolutely. MLP enables continuous extraction of high-value functionality at your own pace. Microservices and micro-frontends are delivered as early milestones, enabling testing and piecemeal production deployment. This eliminates the need to wait for the entire application to be modernized, reducing downtime and accelerating timelines from years to months.

What benefits does this technology provide in terms of speed and agility?

- Reduced technical debt: Breaks down monoliths into independent components, eliminating dead code and enabling easier updates.

- Improved scalability and flexibility: Components conform to your target architecture (e.g., cloud infrastructure, widget libraries), fostering reuse across teams.

- Faster time-to-market: Incremental deployments mean quicker value realization from high-priority features.

- Improved UI/UX: Transforms outdated interfaces into modern, reactive frontends, enhancing user experience and ROI.

- Full transformation: Unlike “as-is” methods, it delivers a truly cloud-native architecture for long-term agility.

Which legacy languages and systems does Synchrony support?

MLP is designed for a variety of legacy systems, including client/server desktop applications written in languages such as PowerBuilder, EGL, Smalltalk, VisualGen, COBOL, and more. It’s flexible and customizable, able to handle unique monolithic architectures across insurance, banking, and other industries.

How can I get started with Synchrony Systems’ Microservices Extraction technology?

Contact us to connect with our senior modernization specialists.